This is “Experiments”, section 12.2 from the book Sociological Inquiry Principles: Qualitative and Quantitative Methods (v. 1.0).

12.2 Experiments

Learning Objectives

- Define experiment.

- Distinguish “true” experiments from preexperimental designs.

- Identify the core features of true experimental designs.

- Describe the difference between an experimental group and a control group.

- Identify and describe the various types of true experimental designs.

- Identify and describe the various types of preexperimental designs.

- Name the key strengths and weaknesses of experiments.

- Define internal validity and external validity.

Experiments are an excellent data collection strategy for those wishing to observe the consequences of very specific actions or stimuli. Most commonly a quantitative research method, experiments are used more often by psychologists than sociologists, but understanding what experiments are and how they are conducted is useful for all social scientists, whether they actually plan to use this methodology or simply aim to understand findings based on experimental designs. An experiment is a method of data collection designed to test hypotheses under controlled conditions. Students in my research methods classes often use the term experiment to describe all kinds of empirical research projects, but in social scientific research, the term has a unique meaning and should not be used to describe all research methodologies.

Several kinds of experimental designs exist. In general, designs considered to be “true experiments” contain three key features: independent and dependent variables, pretesting and posttesting, and experimental and control groups. In the classic experiment, the effect of a stimulus is tested by comparing two groups: one that is exposed to the stimulus (the experimental group) and another that does not receive the stimulus (the control group). In other words, the effects of an independent variable upon a dependent variable are tested. Because the researcher’s interest lies in the effects of an independent variable, she must measure participants on the dependent variable before and after the independent variable (or stimulus) is administered. Thus pretesting and posttesting are both important steps in a classic experiment.
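
The logic of the classic experiment can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the scores are made up, the function names `mean_change` and `estimated_effect` are hypothetical, and comparing average change between groups is one simple way to summarize such data, not a full statistical analysis.

```python
from statistics import mean

def mean_change(pretest, posttest):
    """Average posttest-minus-pretest change for one group."""
    return mean(post - pre for pre, post in zip(pretest, posttest))

def estimated_effect(exp_pre, exp_post, ctrl_pre, ctrl_post):
    """Change in the experimental group minus change in the control group."""
    return mean_change(exp_pre, exp_post) - mean_change(ctrl_pre, ctrl_post)

# Hypothetical scores on some dependent variable (invented for illustration).
exp_pre, exp_post = [10, 12, 11, 13], [14, 15, 15, 16]
ctrl_pre, ctrl_post = [11, 12, 10, 13], [12, 12, 11, 13]

effect = estimated_effect(exp_pre, exp_post, ctrl_pre, ctrl_post)
print(effect)  # 3.0
```

Subtracting the control group's change removes whatever change would have happened without the stimulus, which is why both the pretest and the control group matter in this design.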

One example of experimental research can be found in Shannon K. McCoy and Brenda Major’s (2003) study of people’s perceptions of prejudice. [McCoy, S. K., & Major, B. (2003). Group identification moderates emotional response to perceived prejudice. Personality and Social Psychology Bulletin, 29, 1005–1017.] In one portion of this multifaceted study, all participants were given a pretest to assess their levels of depression. No significant differences in depression were found between the experimental and control groups during the pretest. Participants in the experimental group were then asked to read an article suggesting that prejudice against their own racial group is severe and pervasive, while participants in the control group were asked to read an article suggesting that prejudice against a racial group other than their own is severe and pervasive. Upon measuring depression scores during the posttest period, the researchers discovered that those who had received the experimental stimulus (the article citing prejudice against their same racial group) reported greater depression than those in the control group. This is just one of many examples of social scientific experimental research.

In addition to the classic experimental design, there are two other ways of designing experiments that are considered to fall within the purview of “true” experiments (Babbie, 2010; Campbell & Stanley, 1963). [Babbie, E. (2010). The practice of social research (12th ed.). Belmont, CA: Wadsworth; Campbell, D., & Stanley, J. (1963). Experimental and quasi-experimental designs for research. Chicago, IL: Rand McNally.] They are the Solomon four-group design and the posttest-only control group design. In the former, four groups exist. Two groups are treated as they would be in a classic experiment. Another group receives the stimulus and is then given the posttest. The remaining group does not receive the stimulus but is given the posttest. Table 12.2 "Solomon Four-Group Design" illustrates the features of each of the four groups in the Solomon four-group design.

Table 12.2 Solomon Four-Group Design

| | Pretest | Stimulus | Posttest | No stimulus |
|---|---|---|---|---|
| Group 1 | X | X | X | |
| Group 2 | X | | X | X |
| Group 3 | | X | X | |
| Group 4 | | | X | X |
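
The four groups can also be written down as a small data structure. The sketch below uses invented posttest scores and a hypothetical helper, `posttest_gap`. It rests on an assumption not spelled out in the text above: that one use of the two unpretested groups is to check whether taking a pretest changes how participants respond to the stimulus.

```python
from statistics import mean

# Features of each group, following the description of the design above.
SOLOMON_GROUPS = {
    "Group 1": {"pretest": True, "stimulus": True},
    "Group 2": {"pretest": True, "stimulus": False},
    "Group 3": {"pretest": False, "stimulus": True},
    "Group 4": {"pretest": False, "stimulus": False},
}

# Hypothetical posttest scores (invented for illustration).
posttests = {
    "Group 1": [15, 16, 14, 15],
    "Group 2": [12, 11, 12, 13],
    "Group 3": [14, 13, 14, 15],
    "Group 4": [12, 12, 11, 13],
}

def posttest_gap(stimulus_group, no_stimulus_group):
    """Mean posttest score with the stimulus minus mean without it."""
    return mean(posttests[stimulus_group]) - mean(posttests[no_stimulus_group])

effect_with_pretest = posttest_gap("Group 1", "Group 2")
effect_without_pretest = posttest_gap("Group 3", "Group 4")

# A nonzero difference would hint that the pretest itself shaped responses.
pretest_influence = effect_with_pretest - effect_without_pretest
print(effect_with_pretest, effect_without_pretest, pretest_influence)
```

With real data, a researcher would test whether such differences are statistically meaningful rather than reading them directly off the group means.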

Finally, the posttest-only control group design is also considered a “true” experimental design, though it lacks a pretest. In this design, participants are assigned to either an experimental or a control group. Individuals are then measured on some dependent variable following the administration of an experimental stimulus to the experimental group. In theory, as long as the control and experimental groups have been determined randomly, no pretest is needed.
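
Random assignment itself is simple to sketch. The example below is a minimal illustration, assuming a list of hypothetical participant IDs; the function name `randomly_assign` and the even split into two groups are choices made for this sketch, not a procedure prescribed by the text.

```python
import random

def randomly_assign(participants, seed=None):
    """Shuffle participants and split them into two groups of (nearly) equal size."""
    rng = random.Random(seed)  # a seed makes the assignment reproducible
    shuffled = list(participants)  # copy so the original list is left untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"experimental": shuffled[:half], "control": shuffled[half:]}

# Hypothetical participant IDs P01 through P20.
participants = [f"P{i:02d}" for i in range(1, 21)]
groups = randomly_assign(participants, seed=42)

print(len(groups["experimental"]), len(groups["control"]))  # 10 10
```

Because chance alone decides who lands in which group, the two groups should be similar on average before the stimulus is administered, which is the reasoning behind skipping the pretest.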

Time, other resources such as funding, and even one’s topic may limit a researcher’s ability to conduct a true experiment. For researchers in the medical and health sciences, conducting a true experiment could require denying needed treatment to patients, which is a clear ethical violation. Even those whose research may not involve the administration of needed medications or treatments may be limited in their ability to conduct a classic experiment. In social scientific experiments, for example, it might not be equitable or ethical to provide a large financial or other reward only to members of the experimental group. When random assignment of participants into experimental and control groups is not feasible, researchers may turn to a preexperimental design (Campbell & Stanley, 1963). However, this type of design comes with some unique disadvantages, which we’ll describe as we review the preexperimental designs available.

If we wished to measure the impact of some natural disaster, for example, Hurricane Katrina, we might conduct a preexperiment by identifying an experimental group from a community that experienced the hurricane and a control group from a similar community that had not been hit by the hurricane. This study design, called a static group comparison, has the advantage of including a comparison control group that did not experience the stimulus (in this case, the hurricane) but the disadvantage of containing experimental and control groups that were determined by a factor or factors other than random assignment. As you might have guessed from our example, static group comparisons are useful in cases where a researcher cannot control or predict whether, when, or how the stimulus is administered, as in the case of natural disasters.

In cases where the administration of the stimulus is quite costly or otherwise not possible, a one-shot case study design might be used. In this instance, no pretest is administered, nor is a control group present. In our example of the study of the impact of Hurricane Katrina, a researcher using this design would test the impact of Katrina only among a community that was hit by the hurricane and not seek out a comparison group from a community that did not experience the hurricane. Researchers using this design must be extremely cautious about making claims regarding the effect of the stimulus, though the design could be useful for exploratory studies aimed at testing one’s measures or the feasibility of further study.

Finally, if a researcher is unlikely to be able to identify a sample large enough to split into multiple groups, or if he or she simply doesn’t have access to a control group, the researcher might use a one-group pre-/posttest design. In this instance, pre- and posttests are both taken but, as stated, there is no control group to which to compare the experimental group. We might be able to study the impact of Hurricane Katrina using this design if we’d been collecting data on the impacted communities prior to the hurricane. We could then collect similar data after the hurricane. Applying this design involves a bit of serendipity and chance. Without having collected data from impacted communities prior to the hurricane, we would be unable to employ a one-group pre-/posttest design to study Hurricane Katrina’s impact.

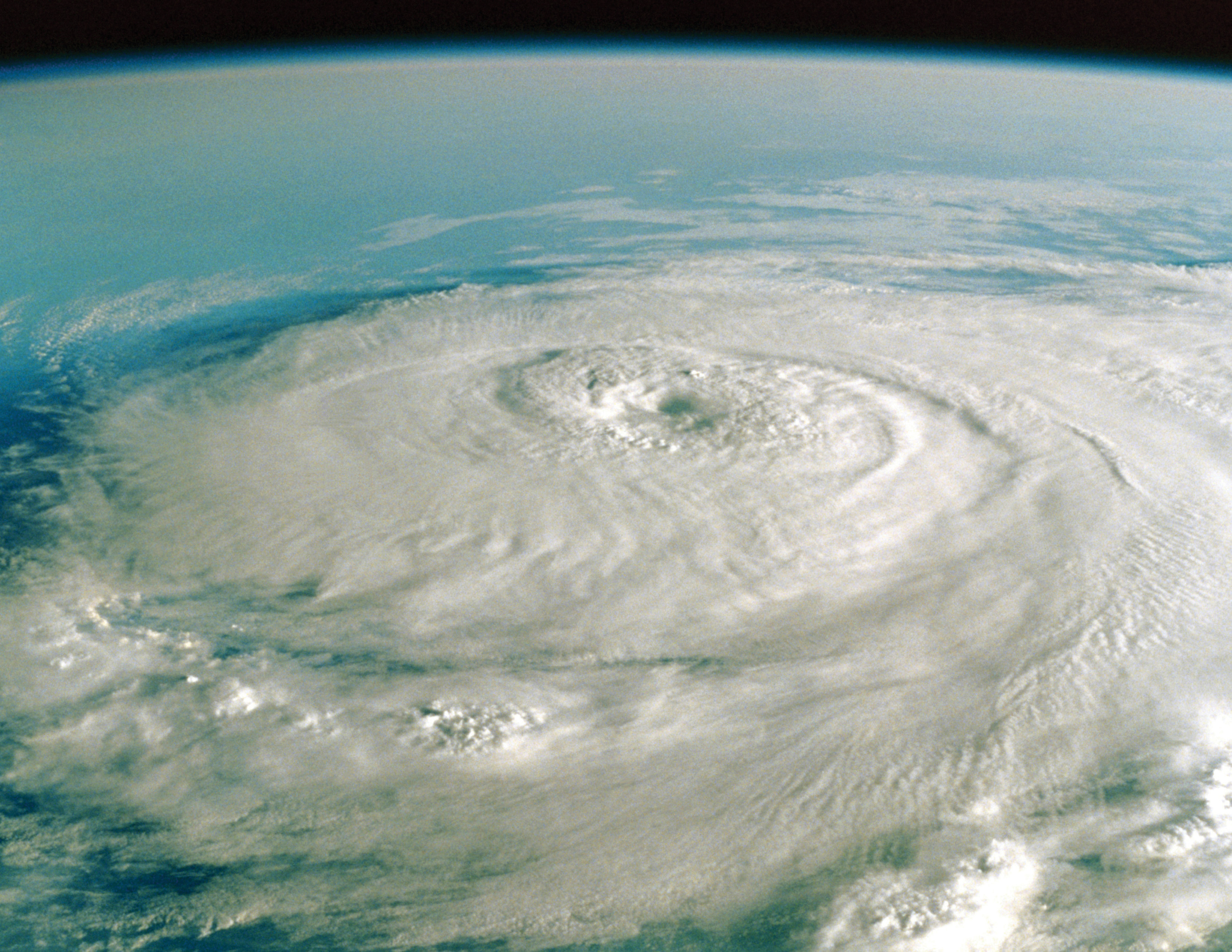

Figure 12.2

Researchers could use a preexperimental design to study the impact of natural disasters such as Hurricane Katrina.

© Thinkstock

Table 12.3 "Preexperimental Designs" summarizes each of the preceding examples of preexperimental designs.

Table 12.3 Preexperimental Designs

| | Pretest | Posttest | Experimental group | Control group |
|---|---|---|---|---|
| One-shot case study | | X | X | |
| Static group comparison | | X | X | X |
| One-group pre-/posttest | X | X | X | |
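
The distinctions among these designs can be summarized as a small decision rule. The sketch below is a simplification for illustration only: the function `classify_design` and its three yes/no flags are hypothetical, and the Solomon four-group design is left out because it combines pretested and unpretested groups.

```python
def classify_design(pretest, control_group, random_assignment):
    """Name the design from this section that matches the given features.

    The three boolean flags are a simplification chosen for this sketch.
    """
    if not control_group:
        # With only one group, random assignment to groups does not apply.
        return "one-group pre-/posttest" if pretest else "one-shot case study"
    if random_assignment:
        return "classic experiment" if pretest else "posttest-only control group"
    if not pretest:
        return "static group comparison"
    # A nonrandom control group with a pretest is not discussed in this section.
    return "not covered in this section"

# The Hurricane Katrina comparison of a hit and a similar unhit community:
print(classify_design(pretest=False, control_group=True, random_assignment=False))
# static group comparison
```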

As implied by the preceding examples where we considered studying the impact of Hurricane Katrina, experiments do not necessarily need to take place in the controlled setting of a lab. In fact, many applied researchers rely on experiments to assess the impact and effectiveness of various programs and policies. You might recall our discussion of the police experiment described in Chapter 2 "Linking Methods With Theory". It is an excellent example of an applied experiment. Researchers did not “subject” participants to conditions in a lab setting; instead, they applied their stimulus (in this case, arrest) to some subjects in the field and they also had a control group in the field that did not receive the stimulus (and therefore were not arrested).

Finally, a review of some of the strengths and weaknesses of experiments as a method of data collection is in order. A strength of this method, particularly in cases where experiments are conducted in lab settings, is that the researcher has substantial control over the conditions to which participants are subjected. Experiments are also generally easier to replicate than are other methods of data collection. Again, this is particularly true in cases where an experiment has been conducted in a lab setting.

Sociologists, who are especially attentive to how social context shapes social life, are likely to point out that a disadvantage of experiments is their artificiality. How often do real-world social interactions occur in the same way that they do in a lab? Experiments that are conducted in applied settings may not be as subject to artificiality, though then their conditions are less easily controlled. Experiments also present a few unique concerns regarding validity. Problems of external validity might arise when the conditions of an experiment don’t adequately represent those of the world outside the boundaries of the experiment. In the case of McCoy and Major’s (2003) research on prejudice described earlier in this section, for example, the questions to ask with regard to external validity are these: Can we say with certainty that the stimulus applied to the experimental group resembles the stimuli that people are likely to encounter in their real lives outside of the lab? Will reading an article on prejudice against one’s race in a lab have the same impact that it would outside of the lab? This is not to suggest that experimental research is not or cannot be valid, but experimental researchers must always be aware that external validity problems can occur and be forthcoming in their reports of findings about this potential weakness. Concerns about internal validity also arise in experimental designs. These have to do with our level of confidence about whether the stimulus actually produced the observed effect or whether some other factor, such as other conditions of the experiment or changes in participants over time, may have produced the effect.

In sum, the potential strengths and weaknesses of experiments as a method of data collection in social scientific research include the following:

Table 12.4 Strengths and Weaknesses of Experimental Research

| Strengths | Weaknesses |
|---|---|
| Researcher control | Artificiality |
| Reliability | Unique concerns about internal and external validity |

Key Takeaways

- Experiments are designed to test hypotheses under controlled conditions.

- True experimental designs differ from preexperimental designs.

- Preexperimental designs each lack one or more of the core features of true experimental designs.

- Experiments enable researchers to have great control over the conditions to which participants are subjected and are typically easier to replicate than other methods of data collection.

- Experiments come with some degree of artificiality and may run into problems of external or internal validity.

Exercises

- Taking into consideration your own research topic of interest, how might you conduct an experiment to learn more about your topic? Which experiment type would you use, and why?

- Do you agree or disagree with the sociological critique that experiments are artificial? Why or why not? How important is this weakness? Do the strengths of experimental research outweigh this drawback?

- Be a research participant! The Social Psychology Network offers many online opportunities to participate in social psychological experiments. Check them out at http://www.socialpsychology.org/expts.htm.